VocaLiST: An Audio-Visual Synchronisation Model for Lips and Voices

The paper has been accepted to Interspeech 2022!

Abstract

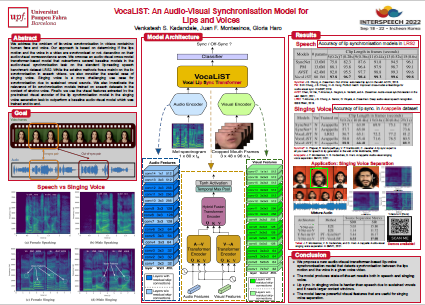

In this paper, we address the problem of lip-voice synchronisation in videos containing human face and voice. Our approach is based on determining if the lips motion and the voice in a video are synchronised or not, depending on their audio-visual correspondence score. We propose an audio-visual cross-modal transformer-based model that outperforms several baseline models in the audio-visual synchronisation task on the standard lip-reading speech benchmark dataset LRS2. While the existing methods focus mainly on the lip synchronisation in speech videos, we also consider the special case of singing voice. Singing voice is a more challenging use case for synchronisation due to sustained vowel sounds. We also investigate the relevance of lip synchronisation models trained on speech datasets in the context of singing voice. Finally, we use the frozen visual features learned by our lip synchronisation model in the singing voice separation task to outperform a baseline audio-visual model which was trained end-to-end. The demos, source code and the pre-trained model will be made available on https://ipcv.github.io/VocaLiST/

Citation

@inproceedings{kadandale2022vocalist, title={VocaLiST: An Audio-Visual Synchronisation Model for Lips and Voices}, author={Kadandale, Venkatesh S and Montesinos, Juan F and Haro, Gloria}, booktitle={Interspeech}, pages={3128--3132}, year={2022} }

Acknowledgements

We acknowledge support by MICINN/FEDER UE project PID2021-127643NB-I00; H2020-MSCA-RISE-2017 project 777826 NoMADS. V. S. K. has received support through “la Caixa” Foundation (ID 100010434), fellowship code: LCF/BQ/DI18/11660064 and the Marie SkłodowskaCurie grant agreement No. 713673. J. F. M. acknowledges support by FPI scholarship PRE2018-083920. We would like to thank Honglie Chen (VGG, Oxford) and Soo-Whan Chung (Naver Corporation) for the insightful discussions on the topic.